Problems in statistics

Something which often frustrates me is seeing how many statistical models are wrong. I am referring to scientific journals, but also commercial models.

Something which you’re going to ask me is what I mean “wrong”. Some might argue that models can’t be wrong or right. They simply are what they are, and you judge them by their performance. However, things are not as straightforward for statistics. Let’s dive deep and examine more.

The role of statistics in data science

The role of statistics in data science is inference. This comes in contrast to machine learning whose role is mostly focused around predictive power. I have talked about this difference in my post Statistics vs Machine learning: The two worlds. So, what does inference mean?

In statistics we are interested in extracting information from a sample of data in order to reach conclusions about certain hypotheses. What does this mean?

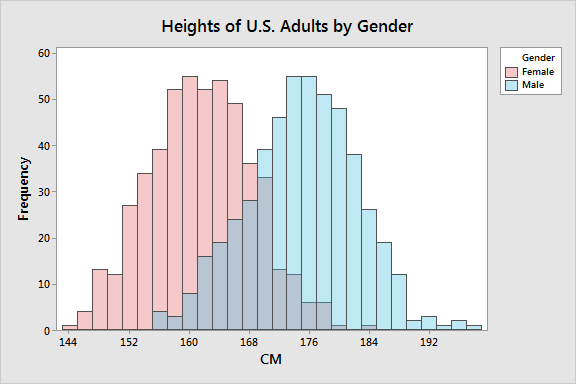

The most common example is hypothesis testing. Let’s say that you want to understand what is the average height of a population. Instead of measuring every individual in this population you can simply take, let’s say 1% of the population and infer what the average height is.

The challenging bit around inference is that it is usually based upon certain assumptions. Unless these assumptions are met, then the models are incorrect.

For example, I have written in the past about the assumptions of linear regression and how you can test them. Some common assumptions of many statistical models include the assumption of underlying normality (the underlying distribution is normal), and the homogeneity of variances.

Issues with statistical assumptions and p-value hacking

So, there are two main issues I see with the application of statistical models.

The first issue is that many practitioners simply forget to test the assumptions and report them! This is plain wrong and lead to false conclusions.

The second problem I’ve seen happening very often is p-value hacking. This is a phenomenon that, unfortunately, is happening in many published papers. Researchers might try to replicate an experiment again and again, until they reach a point where they get good results and simply report these. There are cases where someone might genuinely report wrong results, many of these cases are the result of fraud.

The solution?

So, what can someone do? Whether you are a practitioner, or a consumer of statistical research, I believe there is only one solution, and that is proper education on the topic. It is one the reason I have been running all sorts of courses: from courses for aspiring data scientists to courses for people with no technical expertise. If you want to learn more, make sure to get in touch!