Interpretable machine learning is currently one of the hottest areas in machine learning. We have talked in another post about how interpretable machine learning can help you peer into the black box that most algorithms are, and understand why they make the decisions they do and where they falter.

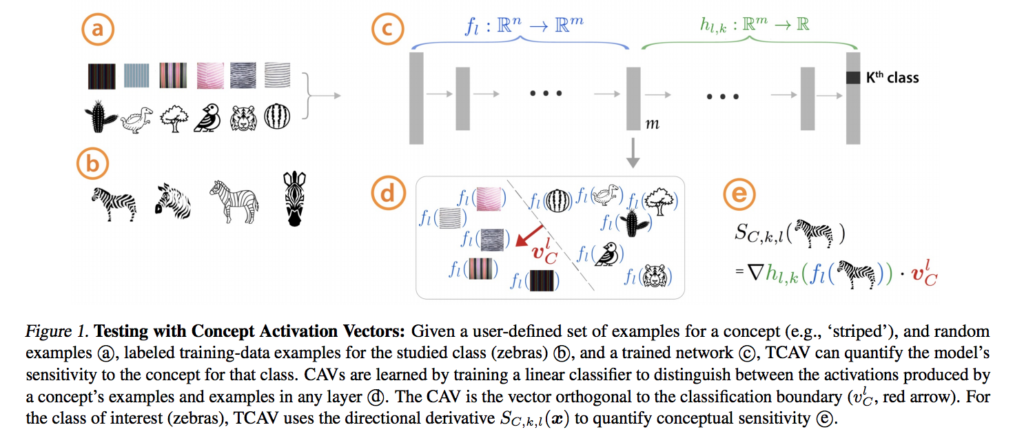

Interpretable machine learning matters in many applications, which is why many large technology companies have started investing more resources in it. For example, Been Kim from Google Brain recently gave an interview about the interpretability research taking place at Google and the development of a new method called Testing with Concept Activation Vectors (TCAV).

Interpretable machine learning is directly related to the topic of bias in algorithms. There have been many calls for algorithmic accountability in the last few years. For example, Amazon made the headlines when they had to scrap a recruitment tool they used to automatically screen CVs.

The problem with this model was that most of its training data came from CVs submitted by male applicants. As a result, the model learned to associate being male with being more likely to be accepted for a technical role, and so the system unfairly discriminated against female candidates. Amazon tried to fix the system, but there was no guarantee that other forms of bias would not creep into its decisions. In the end, they decided to start the project again from scratch.

Against this backdrop, IBM recently released an open source toolkit for algorithmic fairness called AI Fairness 360 (or AIF360 for short). According to IBM:

"The AI Fairness 360 Python package includes a comprehensive set of metrics for datasets and models to test for biases, explanations for these metrics, and algorithms to mitigate bias in datasets and models."
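To make "metrics for datasets" a bit more concrete, here is a minimal sketch of two widely used group fairness metrics, statistical parity difference and disparate impact, written in plain Python. It does not use the AIF360 API. The function names and the toy hiring data are made up for illustration, and the "m"/"f" group labels are an assumption chosen to echo the recruitment example above.

```python
from typing import Sequence


def selection_rate(labels: Sequence[int], groups: Sequence[str], group: str) -> float:
    """Share of favorable outcomes (label 1) among the members of `group`."""
    outcomes = [y for y, g in zip(labels, groups) if g == group]
    if not outcomes:
        raise ValueError(f"no rows for group {group!r}")
    return sum(outcomes) / len(outcomes)


def statistical_parity_difference(labels, groups, unprivileged, privileged) -> float:
    """Selection rate of the unprivileged group minus that of the privileged group.

    0 means both groups are selected at the same rate; negative values mean
    the unprivileged group is selected less often.
    """
    return (selection_rate(labels, groups, unprivileged)
            - selection_rate(labels, groups, privileged))


def disparate_impact(labels, groups, unprivileged, privileged) -> float:
    """Ratio of the two selection rates; 1 means parity.

    Raises ZeroDivisionError if the privileged group has no favorable outcomes.
    """
    return (selection_rate(labels, groups, unprivileged)
            / selection_rate(labels, groups, privileged))


# Hypothetical toy data: 1 = shortlisted, 0 = rejected.
groups = ["m"] * 8 + ["f"] * 4
labels = [1, 1, 1, 1, 0, 0, 0, 0,   # 4 of 8 male applicants shortlisted
          1, 0, 0, 0]               # 1 of 4 female applicants shortlisted

spd = statistical_parity_difference(labels, groups, unprivileged="f", privileged="m")
di = disparate_impact(labels, groups, unprivileged="f", privileged="m")
print(f"statistical parity difference: {spd:.2f}")
print(f"disparate impact: {di:.2f}")
```

On this toy data the female selection rate is half the male one. Metrics like these only describe outcomes by group; they say nothing about why the gap exists.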

The package does indeed include many algorithms for mitigating bias, such as adversarial debiasing (a method that borrows the adversarial training idea behind generative adversarial networks) and calibrated equalized odds post-processing.
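The two algorithms named above are too involved for a short snippet, so the sketch below swaps in a simpler mitigation technique, reweighing (due to Kamiran and Calders), which AIF360 also ships as a pre-processing algorithm. This is a plain Python illustration under stated assumptions, not the toolkit's implementation: the function names and the toy data are hypothetical. The idea is to give each training row a weight so that, once weighted, group membership and outcome look statistically independent.

```python
from collections import Counter
from typing import List, Sequence


def reweighing_weights(labels: Sequence[int], groups: Sequence[str]) -> List[float]:
    """One weight per row: w(g, y) = P(g) * P(y) / P(g, y).

    Rows from (group, label) combinations that are under-represented relative
    to independence get weights above 1, over-represented ones below 1.
    """
    n = len(labels)
    group_counts = Counter(groups)
    label_counts = Counter(labels)
    joint_counts = Counter(zip(groups, labels))
    return [
        group_counts[g] * label_counts[y] / (n * joint_counts[(g, y)])
        for g, y in zip(groups, labels)
    ]


def weighted_selection_rate(labels, groups, weights, group) -> float:
    """Weighted share of favorable outcomes (label 1) within `group`."""
    rows = [(w, y) for w, y, g in zip(weights, labels, groups) if g == group]
    return sum(w * y for w, y in rows) / sum(w for w, _ in rows)


# Hypothetical toy data: 1 = shortlisted, 0 = rejected.
groups = ["m"] * 8 + ["f"] * 4
labels = [1, 1, 1, 1, 0, 0, 0, 0,
          1, 0, 0, 0]

weights = reweighing_weights(labels, groups)
rate_m = weighted_selection_rate(labels, groups, weights, "m")
rate_f = weighted_selection_rate(labels, groups, weights, "f")
print(f"weighted selection rate, m: {rate_m:.3f}")
print(f"weighted selection rate, f: {rate_f:.3f}")
```

After reweighing, both groups have the same weighted selection rate, equal to the overall rate in the data. A model trained with these sample weights no longer sees the original imbalance, although that alone does not guarantee fair predictions.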

I recently had the chance to speak on Bloxlive TV about this toolkit and algorithmic bias. You can watch the full interview here.